Building Your AI Startup Tech Stack: A Founder's Essential Guide

K R

Days AI


Your first product decision isn't the user interface. It's the technology stack powering everything underneath. For an AI startup, that choice carries real weight. A wrong turn doesn't just create technical debt; it can cap your growth potential before you launch. The infrastructure for a standard SaaS app often can't handle the demands of modern AI, so you need a different blueprint.

This guide provides that blueprint. We'll move past generic tool lists to focus on the architectural patterns that enable rapid, sustainable scaling and form a scalable AI infrastructure. To make this concrete, let's imagine we're building 'StyleSync,' an AI-powered personal shopping app. It gives real-time fashion recommendations from user photos. We'll use StyleSync's needs to illustrate our tech stack choices.


Navigating Key Trends: Cloud-Native, Serverless & MLOps

The right blueprint for an AI startup tech stack rests on three pillars: cloud-native design, serverless AI architecture, and rigorous MLOps best practices. These aren't buzzwords. They are strategic choices that directly impact your ability to scale, control costs, and ship reliable AI products.

Cloud-Native as the Foundation

Building cloud-native means your applications are built for the cloud, not just on it. This approach uses containers (Docker) and orchestration (Kubernetes) to package your AI models. The result is a portable and resilient system. You can move workloads between cloud providers without a complete rewrite, avoiding expensive vendor lock-in.

The Rise of Serverless AI Architecture

A serverless AI architecture lets you run code without managing servers. You pay only for the compute time you use. Imagine StyleSync has an AI feature to generate outfit ideas. With a serverless approach, compute resources activate only when a user requests a new look, then shut down. This model cuts costs for unpredictable workloads, making serverless AI architecture a strategic choice. An O'Reilly survey found 62% of organizations adopt serverless for its cost-saving benefits.
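To make the pay-per-request idea concrete, here is a minimal sketch of what StyleSync's outfit endpoint might look like as a function in the `handler(event, context)` shape that AWS Lambda uses for Python. The `generate_outfit_ideas` helper, the request fields (`style`, `count`), and the response layout are assumptions made up for illustration; a real deployment would call an actual model and would be wired to an API gateway.

```python
import json
import time


def generate_outfit_ideas(style: str, count: int) -> list[str]:
    # Hypothetical stand-in for a real model call; StyleSync's model is not specified.
    return [f"{style} outfit #{i + 1}" for i in range(count)]


def handler(event, context):
    """Entry point in the handler(event, context) shape used by AWS Lambda for Python."""
    started = time.perf_counter()

    # The request body arrives as a JSON string; fall back to defaults if it is absent.
    body = json.loads(event.get("body") or "{}")
    style = body.get("style", "casual")
    count = min(int(body.get("count", 3)), 10)  # cap the work done per request

    ideas = generate_outfit_ideas(style, count)

    # Billing in a serverless model follows execution time, so it is worth measuring.
    elapsed_ms = (time.perf_counter() - started) * 1000
    return {
        "statusCode": 200,
        "body": json.dumps({"ideas": ideas, "compute_ms": round(elapsed_ms, 3)}),
    }


if __name__ == "__main__":
    # Local smoke test with a fake event; no cloud account is needed to run this.
    fake_event = {"body": json.dumps({"style": "minimalist", "count": 2})}
    print(handler(fake_event, None))
```

Nothing runs between requests, which is the property that keeps costs low when traffic is unpredictable.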

MLOps Best Practices: Non-Negotiable for AI Startups

Machine Learning Operations (MLOps) is the assembly line for your AI models. It automates the model lifecycle, from data ingestion to deployment. AI models aren't static; their accuracy degrades as real-world data moves away from the data they were trained on. MLOps provides the framework to retrain and redeploy models systematically. Without it, you're building and shipping models by hand, a slow process that doesn't scale.
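One small piece of that assembly line is deciding when a model needs retraining. The sketch below is an assumption-laden illustration, not a standard MLOps API: it compares the mean of one input feature in live traffic against the training data and flags a retrain when the shift passes a threshold. The function names and the 0.5 threshold are hypothetical, and production systems typically use richer drift tests.

```python
from statistics import mean, stdev


def drift_score(reference: list[float], live: list[float]) -> float:
    """Shift of the live mean from the reference mean, in reference standard deviations."""
    spread = stdev(reference)
    if spread == 0:
        # A constant reference feature: any change at all counts as unbounded drift.
        return 0.0 if mean(live) == mean(reference) else float("inf")
    return abs(mean(live) - mean(reference)) / spread


def should_retrain(reference: list[float], live: list[float], threshold: float = 0.5) -> bool:
    # The threshold is an illustrative default, not a recommended value.
    return drift_score(reference, live) > threshold


if __name__ == "__main__":
    training_feature = [1.0, 2.0, 3.0, 4.0, 5.0]
    live_feature = [4.0, 5.0, 6.0, 7.0, 8.0]
    print("retrain needed:", should_retrain(training_feature, live_feature))
```

In an automated pipeline, a check like this would run on a schedule and kick off the retraining job instead of printing a message.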

| Trend | What It Is | Key Founder Benefit |
| --- | --- | --- |
| Cloud-Native | Building applications in portable containers managed by orchestrators. | Avoids vendor lock-in and improves resource management. |
| Serverless | Executing code based on events without provisioning servers. | Reduces operational costs, especially for variable workloads. |
| MLOps | Applying DevOps principles to the machine learning lifecycle, embodying MLOps best practices. | Ensures model reliability and enables rapid, scalable deployment. |

The Intersecting Realms: Cloud-Native, Serverless, and MLOps Converging

Core Pillars of an AI Startup Tech Stack: Data, Development & Deployment

Cloud-native, serverless, and MLOps are applied across three functional pillars: Data, Development, and Deployment. These form the backbone of any scalable AI infrastructure. Getting each pillar right is essential for your overall AI startup tech stack. A failure in one area will undermine the entire system.

The Data Foundation

Your AI models are only as good as their data. The data pillar covers everything from ingestion to transformation. For StyleSync, our data foundation must handle diverse data types. We'd use Amazon S3 for raw user-uploaded images (unstructured data). User profiles and transaction history would live in a relational database like PostgreSQL. Most critically, to find visually similar clothing items, we'd use a vector database like Pinecone to store image embeddings. This powers our core recommendation feature.
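To show what "finding visually similar items from embeddings" means, here is a brute-force sketch in plain Python. It is not Pinecone's API: a vector database performs the same kind of nearest-neighbor lookup, but with indexes built for millions of vectors. The three-dimensional embeddings and item names are invented for illustration; real image embeddings have hundreds of dimensions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: list[float], catalog: dict[str, list[float]], k: int = 2):
    """Return the k catalog items most similar to the query embedding."""
    scored = [(item_id, cosine_similarity(query, vec)) for item_id, vec in catalog.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical embeddings for three catalog items.
CATALOG = {
    "denim_jacket": [0.9, 0.1, 0.0],
    "jean_shirt": [0.8, 0.2, 0.1],
    "silk_dress": [0.0, 0.2, 0.9],
}

if __name__ == "__main__":
    user_photo_embedding = [1.0, 0.0, 0.0]
    for item_id, score in top_k(user_photo_embedding, CATALOG):
        print(f"{item_id}: {score:.3f}")
```

Scanning every item works for a demo catalog; the reason to adopt a dedicated vector database is that this linear scan becomes too slow as the catalog grows.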

The Development Engine

This is your AI research lab. It's where your team builds, trains, and validates models. An effective development environment enables rapid experimentation. A poor one creates bottlenecks. Your goal is to make it easy for your team to test new ideas quickly.

A strong development pillar includes tools for experiment tracking (like MLflow) and model versioning. It also requires on-demand access to compute resources, especially GPUs. Using containers like Docker ensures a model works the same on a developer's laptop as it does in the cloud.

The Deployment Pipeline

Getting a model into production is where many AI initiatives fail. A report from Algorithmia found that companies can take over seven months to deploy a single machine learning model. An automated deployment pipeline, built on MLOps best practices, is the solution. This pillar connects your development engine to your live application.

You'll use CI/CD tools to automate testing and release. You will also choose a serving strategy. You might use a serverless AI architecture with AWS Lambda for models with intermittent traffic. For complex, high-traffic models, Kubernetes provides more control.
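Automated testing for a model usually includes a promotion gate: the pipeline releases a new model only if it beats the one in production. The sketch below shows one way such a gate could look. The `EvalReport` fields, the 1-point accuracy gain, and the 200 ms latency budget are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalReport:
    accuracy: float        # fraction correct on a held-out validation set
    p95_latency_ms: float  # 95th percentile inference latency


def should_promote(
    candidate: EvalReport,
    production: EvalReport,
    min_gain: float = 0.01,         # hypothetical: require at least +1 point of accuracy
    max_latency_ms: float = 200.0,  # hypothetical latency budget
) -> tuple[bool, str]:
    """Decide whether a CI/CD pipeline should release the candidate model."""
    if candidate.p95_latency_ms > max_latency_ms:
        return False, "latency budget exceeded"
    if candidate.accuracy < production.accuracy + min_gain:
        return False, "accuracy gain too small"
    return True, "promote"


if __name__ == "__main__":
    live = EvalReport(accuracy=0.80, p95_latency_ms=120.0)
    new = EvalReport(accuracy=0.83, p95_latency_ms=150.0)
    print(should_promote(new, live))
```

A CI job would call a check like this after evaluation and fail the release step whenever the first element of the result is `False`.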

| Pillar | Purpose | Example Technologies | Key Goal |
| --- | --- | --- | --- |
| Data | Ingest, store, and process all data for training and inference. | Amazon S3, Pinecone, PostgreSQL | Create a reliable, high-quality data source. |
| Development | Build, train, and experiment with AI models. | MLflow, Docker, NVIDIA GPUs | Accelerate the model creation lifecycle. |
| Deployment | Serve models to users and monitor their performance. | Kubernetes, AWS Lambda, CI/CD tools | Automate release and ensure model reliability. |

Strategic Framework for Your Scalable AI Infrastructure

Knowing the core pillars is the first step. Choosing the tools is the next. Without a clear framework, founders often pick tools based on hype or familiarity. A strategic approach ensures every tool serves a purpose, leading to a cohesive and scalable AI infrastructure.

Prioritizing Scalability

For an AI startup, scalability isn't just about handling more web users; it's about handling more predictions. For StyleSync, if we go from 1,000 to 100,000 users, the inference load on our recommendation model grows roughly in proportion, about 100x, and GPU capacity typically costs far more to add than web servers. Your architecture must scale GPU resources for inference, not just web servers. A serverless AI architecture for model endpoints or a Kubernetes setup with GPU auto-scaling becomes critical. This prevents bottlenecks as your user base grows.
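A back-of-the-envelope estimate helps turn user counts into inference capacity. The sketch below is a rough sizing formula under stated assumptions, not a benchmark: requests per user per day, the peak-to-average factor, the throughput of one GPU replica, and the headroom are all hypothetical inputs you would replace with your own measurements.

```python
import math


def replicas_needed(
    users: int,
    requests_per_user_per_day: float,
    peak_factor: float,
    per_replica_rps: float,
    headroom: float = 0.3,
) -> int:
    """Estimate how many model replicas are needed to serve peak inference traffic."""
    avg_rps = users * requests_per_user_per_day / 86_400  # seconds in a day
    peak_rps = avg_rps * peak_factor                      # traffic is not evenly spread
    usable_rps = per_replica_rps * (1 - headroom)         # keep spare capacity per replica
    return max(1, math.ceil(peak_rps / usable_rps))


if __name__ == "__main__":
    # Hypothetical inputs: 20 requests per user per day, 5x peak, 10 requests/s per replica.
    for users in (1_000, 100_000):
        print(users, "users ->", replicas_needed(users, 20, 5, 10), "replica(s)")
```

With these made-up inputs, 1,000 users fit on a single replica while 100,000 users need 17, which is why auto-scaling matters more than the initial instance size.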

Calculate Total Cost of Ownership (TCO)

The sticker price of a tool is only part of the story. A founder must consider the AI-specific Total Cost of Ownership (TCO). This includes:

GPU compute costs: For both model training (large, infrequent jobs) and real-time inference (constant, smaller jobs).

Data acquisition & labeling: Costs for sourcing data and using services like Scale AI for annotation.

Specialized talent: The higher cost of hiring MLOps engineers vs. general DevOps.

Managed service premiums: The cost of using platforms like Databricks or managed vector databases over self-hosting.

Flexera's 2023 State of the Cloud report found that organizations estimate 28% of their cloud spend is wasted. Managed services can often lower TCO by cutting the engineering time spent running infrastructure, provided the service premium is smaller than that saving.
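The cost components listed above can be added up in a simple model to compare options. The sketch below is illustrative only: the `MonthlyCosts` fields mirror the list, and every dollar figure and hour count is a made-up placeholder, not a quote from any vendor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MonthlyCosts:
    gpu_training: float       # large, infrequent training jobs
    gpu_inference: float      # constant, smaller inference jobs
    data_labeling: float      # data acquisition and annotation
    engineering_hours: float  # specialist time spent running the stack
    hourly_rate: float        # loaded cost of that specialist time
    service_fees: float       # managed service premiums

    def total(self) -> float:
        """Sum every component, converting engineering time into money."""
        return (
            self.gpu_training
            + self.gpu_inference
            + self.data_labeling
            + self.engineering_hours * self.hourly_rate
            + self.service_fees
        )


if __name__ == "__main__":
    # Hypothetical numbers: self-hosting has no fees but needs more engineering time.
    self_hosted = MonthlyCosts(2000, 3000, 1500, engineering_hours=80, hourly_rate=100, service_fees=0)
    managed = MonthlyCosts(2000, 3000, 1500, engineering_hours=20, hourly_rate=100, service_fees=2500)
    print("self-hosted:", self_hosted.total())
    print("managed:", managed.total())
```

In this invented scenario the managed option wins because 60 saved engineering hours outweigh the fee; with a cheaper team or a pricier service, the comparison flips.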

Focus on Ecosystem and Integration

Your AI startup tech stack is a system, not a collection of tools. Each component must communicate effectively. A machine learning model is useless if it can't access the data warehouse. A CI/CD pipeline fails if it can't connect to your container registry. Always evaluate how a new tool fits your existing MLOps best practices workflow.

| Evaluation Criteria | Key Question for Founders | Impact on Your Stack |
| --- | --- | --- |
| Scalability | Can this tool handle 100x our current workload without a redesign, ensuring a truly scalable AI infrastructure? | Determines long-term viability and prevents future re-platforming. |
| Total Cost (TCO) | What are the hidden costs of maintenance, integration, and training? | Reveals the true financial impact beyond the subscription fee. |
| Ecosystem Fit | Does this tool integrate easily with our existing data and deployment pillars? | Reduces engineering friction and accelerates time-to-market. |
| Team Alignment | Does my team have the skills to use this, or can we hire for it? | Ensures the tool is actually adopted and used effectively. |

This framework moves the conversation from "what's the hottest tool?" to "what's the right tool for our business?" Applying these criteria helps you build a cohesive and scalable AI infrastructure. It's the difference between a tech stack that fuels growth and one that becomes a bottleneck.
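One way to apply the four criteria consistently is a weighted scorecard. The sketch below is a hypothetical example: the weights, the 1 to 5 rating scale, and the two candidate tools are assumptions for illustration, and your own weights should reflect your business priorities.

```python
# Hypothetical weights for the four evaluation criteria; they sum to 1.0.
WEIGHTS = {
    "scalability": 0.35,
    "tco": 0.30,
    "ecosystem_fit": 0.20,
    "team_alignment": 0.15,
}


def weighted_score(ratings: dict[str, int], weights: dict[str, float] = WEIGHTS) -> float:
    """Combine 1-5 ratings per criterion into a single comparable score."""
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return round(sum(weights[name] * ratings[name] for name in weights), 2)


if __name__ == "__main__":
    # Invented ratings for two candidate tools.
    candidates = {
        "tool_a": {"scalability": 5, "tco": 2, "ecosystem_fit": 4, "team_alignment": 3},
        "tool_b": {"scalability": 3, "tco": 4, "ecosystem_fit": 4, "team_alignment": 5},
    }
    for name, ratings in candidates.items():
        print(name, weighted_score(ratings))
```

The score does not make the decision for you, but writing the weights down forces the team to agree on what matters before comparing tools.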

Your AI startup tech stack is your first major strategic decision. It's not just about choosing tools; it's about architecting the engine for your AI product. The right choices create a foundation for speed and innovation, not technical debt.

Think back to the core pillars: a solid data pipeline, agile development environments, and a robust deployment strategy. Mastering these areas, especially adhering to MLOps best practices, is how you build a durable company. Your future scalable AI infrastructure begins with the architectural choices you make today.


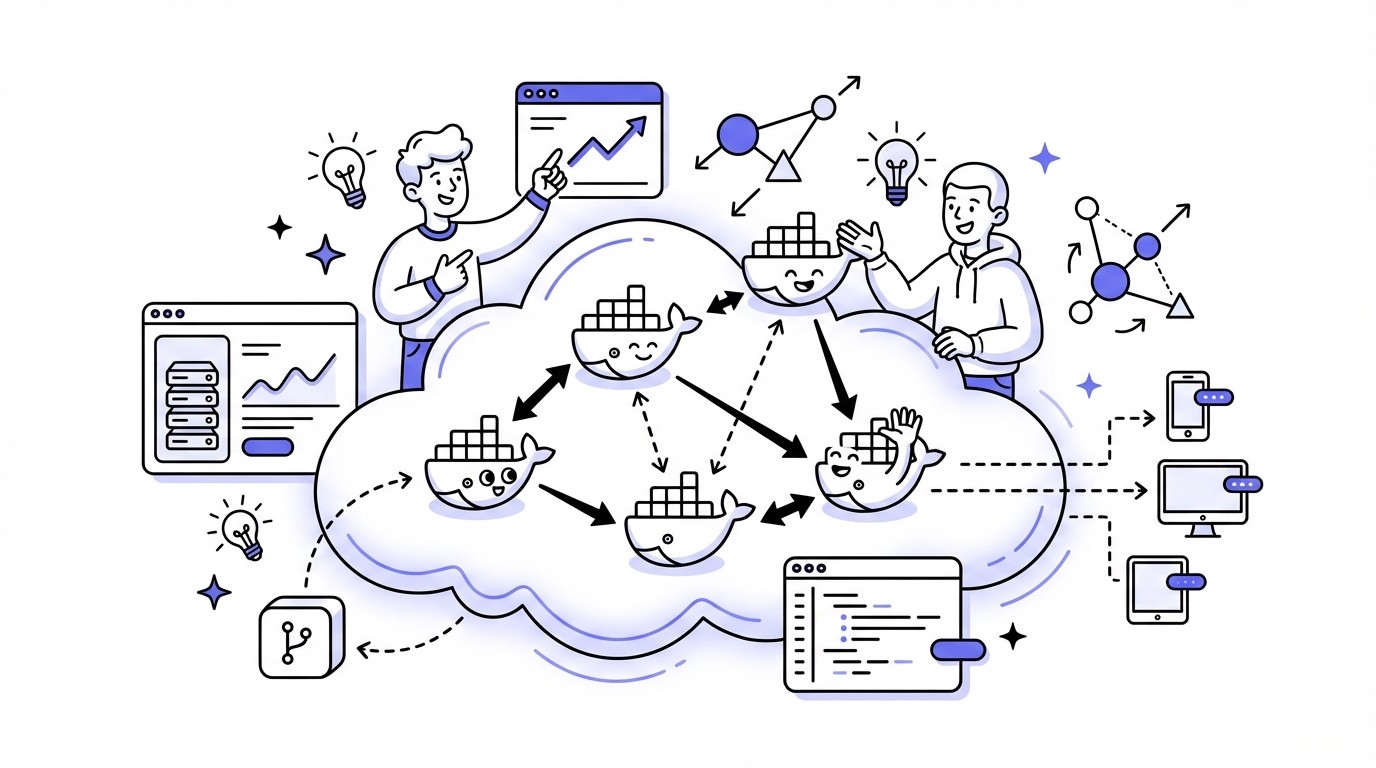

Simplified Diagram of Cloud-Native Architecture Highlighting Containers


Frequently Asked Questions

What is an AI startup tech stack and why is it crucial for growth?

An AI startup tech stack is the specific set of technologies and architectural patterns designed to power artificial intelligence applications, differing significantly from standard SaaS infrastructure. Choosing the right stack is crucial because it directly impacts an AI startup's ability to scale, manage costs, and innovate rapidly, forming the foundation for a truly scalable AI infrastructure.

Why are MLOps best practices non-negotiable for AI startups?

Adopting MLOps best practices is essential for AI startups because it automates the entire machine learning lifecycle, from data ingestion to model deployment and retraining. This ensures model reliability, enables rapid iteration, and allows for systematic updates as data evolves, preventing models from degrading and making the process scalable.

How does serverless AI architecture benefit an AI startup's tech stack?

A serverless AI architecture allows an AI startup to run code without managing underlying servers, paying only for the compute time used. This approach significantly reduces operational costs, especially for unpredictable or variable workloads, making it a strategic choice for optimizing resource utilization within the overall AI startup tech stack.

What are the core pillars of a robust and scalable AI infrastructure?

The core pillars of a scalable AI infrastructure are Data, Development, and Deployment. The Data pillar handles ingestion and transformation, the Development pillar facilitates model building and experimentation, and the Deployment pillar ensures efficient model delivery. Getting each of these right is fundamental for a resilient AI startup tech stack.

What role does cloud-native design play in an AI startup's tech stack?

Cloud-native design is a foundational pillar for an AI startup tech stack, utilizing containers like Docker and orchestration tools such as Kubernetes. This approach builds applications specifically for the cloud, ensuring portability, resilience, and efficient resource management. It helps avoid vendor lock-in and supports a flexible, scalable AI infrastructure.

Which data technologies are fundamental for an AI startup's tech stack?

For an AI startup tech stack, a robust data foundation includes diverse technologies. Amazon S3 is ideal for unstructured data like images, while relational databases such as PostgreSQL handle structured user profiles. Critically, vector databases like Pinecone are essential for storing embeddings and powering core AI features like similarity searches and recommendations.

How does an AI startup's tech stack differ from a traditional SaaS stack?

An AI startup's tech stack differs by prioritizing architectural patterns like cloud-native design, serverless AI architecture, and rigorous MLOps best practices. Unlike traditional SaaS, it specifically addresses the unique demands of AI, focusing on scalable data pipelines, specialized compute (GPUs), and automated model lifecycle management to ensure continuous innovation and growth.
